I hate energy efficiency.

Now, before you send the Twitter hordes after me, I'll explain. When you hear “energy efficiency,” what do you think of? The uncomfortable hue of a compact fluorescent bulb? Hearing the office AC cut out promptly at 5pm? Being a little colder than you might like in January?

Don’t get me wrong, I can’t stand wasting resources, including energy. It’s just that “energy efficiency” frequently connotes sacrifice: giving something up. The term is certainly appropriate in many situations; it just isn’t the best descriptor when it comes to IT.

When Dell drafted our 2020 Legacy of Good Plan, we deliberately chose to focus on “energy intensity” for our product portfolio energy target. ‘Intensity’ is a term more common in the economist’s lexicon than the IT professional’s, but it does a better job of describing consequences and requirements: if you have to do this much work, you’re going to need that much energy.

Our goal is to reduce the energy intensity of our product portfolio by 80 percent by 2020, compared to our 2011 baseline (see our other goals). And we’re a good part of the way there. If running a set of workloads consumed 100 kilowatt-hours (kWh) of energy in 2011, that same work would take only 57 kWh today, a 43 percent reduction. Our customers (usually) describe what they need to do, then ask about energy consumption. They typically don’t tell us how much energy they have, then ask how much compute they can buy (although there are always a few exceptions).
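The arithmetic behind those figures is simple enough to sketch. The Python below is a minimal illustration, not Dell’s methodology: it assumes energy intensity can be treated as the energy consumed for a fixed set of workloads, and it uses only the 100 kWh, 57 kWh and 80 percent figures quoted above. The helper name `intensity_reduction` is hypothetical.

```python
# Minimal sketch: energy intensity treated as energy used for a fixed set of
# workloads (an assumption for illustration). Figures are the ones quoted above.

def intensity_reduction(baseline_kwh: float, current_kwh: float) -> float:
    """Hypothetical helper: fractional energy reduction for the same work."""
    return 1.0 - current_kwh / baseline_kwh


BASELINE_2011_KWH = 100.0  # energy for a fixed set of workloads in 2011
CURRENT_KWH = 57.0         # energy for the same workloads today
GOAL_REDUCTION = 0.80      # the 80 percent reduction target for 2020

achieved = intensity_reduction(BASELINE_2011_KWH, CURRENT_KWH)
target_kwh = BASELINE_2011_KWH * (1.0 - GOAL_REDUCTION)

print(f"Reduction so far: {achieved:.0%}")                      # 43%
print(f"2020 target for the same work: {target_kwh:.0f} kWh")   # 20 kWh
```

In other words, hitting the 80 percent goal means the same 100 kWh workload from 2011 would need about 20 kWh by 2020.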

So we’ve made progress. But what does that mean for you?

Customers want to do more, and need to do more, with their technology

What this means for you depends on who you are and how you use IT. One thing seems to be common, though: we all expect more from our IT.

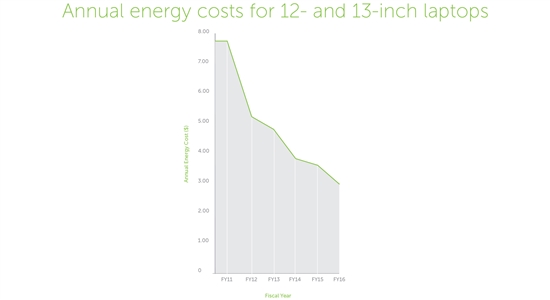

If you’re a laptop user, reducing energy intensity is not going to affect your wallet much. Sure, powering the Dell laptop you bought last year costs less than half of what it cost to power your 2010 machine, and you should be able to buy a cup of good coffee with the savings. But probably far more important than the $3.10 you’ll spend to power that laptop this year is the fact that you’ve got the processing power to run the new applications or games you need, or that your battery will last a lot longer on a single charge.

A Dell laptop purchased in FY16 costs only about $3.10 per year to power, compared to $7.03 per year for a model purchased in FY11 (based on average power consumption, average U.S. electricity prices and typical annual usage).
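As a quick check on the “less than half” claim, the sketch below compares the two annual figures quoted above. It is a hypothetical illustration in Python; it does not reproduce the underlying estimate, which depends on power consumption, electricity price and usage assumptions that aren’t listed here.

```python
# Annual cost to power a Dell laptop, as quoted above (USD per year).
COST_FY11 = 7.03
COST_FY16 = 3.10

savings = COST_FY11 - COST_FY16   # yearly savings from the newer model
ratio = COST_FY16 / COST_FY11     # share of the old cost still being paid

print(f"Annual savings: ${savings:.2f}")           # $3.93
print(f"FY16 cost as share of FY11: {ratio:.0%}")  # 44%
```

At roughly 44 percent of the FY11 cost, the newer machine does indeed cost less than half as much to power.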

What about the data center? In 2007, the EPA released a report on server and data center energy consumption that set off alarm bells: data centers accounted for a significant share of all electricity produced in the U.S., and that share was growing. But a lot can happen in a few years. Lawrence Berkeley National Laboratory recently updated the 2007 report with new data. Although server and data center energy consumption increased 90 percent between 2000 and 2005, it increased only 24 percent from 2005 to 2010. And from 2010 to 2014? Only a modest 4 percent. Still, data centers accounted for about 1.8 percent of all U.S. electricity consumption in 2014.

The new report cites several reasons why earlier dire predictions of runaway energy consumption failed to materialize, including:

- Slower growth in the number of servers operating in data centers

- Roughly constant power demand per server since 2005

- Efficiency improvements in power and cooling, storage and networking

- Less wasted energy, thanks to virtualization and better utilization in general

- A shift to hyperscale (and highly efficient) data centers that are replacing smaller, less efficient ones

While demand for computing continued to increase, the industry was able to meet that demand by reducing the energy intensity of IT by almost an equal amount.

If we hadn’t reduced energy intensity and improved utilization, the consequences would have been significant. Counting both past and projected demand, we would have had to find an additional 600 billion kWh of electricity to support U.S. compute demand from 2010 to 2020.

When you consider the impact of avoiding 600 billion kilowatt-hours of electricity, you start to see why this really matters. According to the U.S. EPA, using that much electricity in the United States would generate approximately 422 million metric tons of “carbon dioxide equivalent,” a measure that expresses the warming potential of all the greenhouse gases emitted as the amount of carbon dioxide that would have the same effect.

Don’t worry: hardly anyone can look at that number and know what it means. Thankfully, the EPA provides a few equivalencies of its own to make sense of it. For example, it equals the carbon dioxide equivalent from adding 5.5 million new homes to the mix over the same 10-year period.

Want to soak up all that carbon dioxide? Plant trees. The EPA calculates you would need to have planted nearly 11 billion trees in 2010 and grown them for those 10 years to absorb that much. That’s more trees than there are in Germany, Belgium, Luxembourg and the Netherlands combined.
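For readers who want to see how the kilowatt-hour figure connects to the carbon figure, the sketch below back-calculates the emission factor implied by the two numbers quoted earlier (600 billion kWh and 422 million metric tons of carbon dioxide equivalent). It is an illustrative assumption, not the EPA’s published methodology, and the function name `co2e_tonnes` is hypothetical.

```python
# Figures quoted in the text.
AVOIDED_KWH = 600e9   # additional electricity avoided, 2010-2020
CO2E_TONNES = 422e6   # metric tons of CO2 equivalent for that electricity

# Implied emission factor, back-calculated from the two figures above
# (an assumption for illustration, not an official EPA value).
FACTOR_KG_PER_KWH = CO2E_TONNES * 1000 / AVOIDED_KWH


def co2e_tonnes(kwh: float, factor_kg_per_kwh: float = FACTOR_KG_PER_KWH) -> float:
    """Hypothetical helper: convert electricity use (kWh) to metric tons of CO2e."""
    return kwh * factor_kg_per_kwh / 1000


print(f"Implied factor: {FACTOR_KG_PER_KWH:.3f} kg CO2e per kWh")  # 0.703
print(f"CO2e for 600 billion kWh: {co2e_tonnes(AVOIDED_KWH) / 1e6:.0f} million metric tons")  # 422
```

The same helper could convert any other electricity figure into carbon dioxide equivalent, with the caveat that a real calculation would use an official, region-specific emission factor rather than one derived from two rounded numbers.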

Customers are leaving a smaller environmental footprint

Our goal of reducing energy intensity by 80 percent was recognized by CDP as a “science-based target,” consistent with the level of decarbonization required to keep the global temperature increase below 2°C compared to pre-industrial levels. Since we set the goal in 2011, we estimate we have helped our customers avoid nearly 3 million metric tons of carbon dioxide equivalent, the same as taking more than 600,000 cars off the road for a year.

High performance computing (HPC) is helping us achieve net positive results

Our focus on energy means our products can do more: more compute, faster processing. A great example comes from our work with the Translational Genomics Research Institute (TGen), which works with children who have neuroblastoma, a rare and often deadly form of pediatric cancer. Some treatments are proving effective. The problem is that, in some cases, certain treatments do more harm than good. By analyzing a child’s already sequenced genome, researchers can identify important mutation markers that indicate which type of treatment will yield the best results.

The challenge was that the analysis took weeks. Doctors had to start treatment before the results were ready and hope for the best.

Dell donated the HPC technology, funding and expertise to help change that. We’ve helped TGen cut the time needed to analyze the 90 billion data points in a child’s sequenced genome, so researchers can find and evaluate those mutations in 8 hours instead of weeks. This means doctors can act on the information before they start treatment, giving kids a much better chance. For TGen, having reliable HPC nodes was literally a matter of saving lives.

You may have the horsepower, but if you don’t have the feed for the horses, you’re not going anywhere. We reduce how much feed you need by providing energy-efficient computing.

Yeah, I said something real close to “energy efficiency.”

You know, I can live with the term, as long as we agree that what matters is not only what you can save, but also what you can enable.

To learn about other ways Dell’s Legacy of Good goals are paying off for customers, communities and the planet, visit www.dell.com/legacyofgoodupdate.

Dell is committed to providing customers with solutions that give them the power to do more while consuming less.