Recently, I’ve been reading a lot about high-frequency trading (HFT). It appears to support securities trading in the US by using high-spec computers to execute trades at very high speed. This brought to mind the de-duplication function within Data Domain, which also relies on CPU performance and can store a large amount of data on a small amount of disk.

Data Domain can store so much data on so little disk because its systems de-duplicate our customers’ data before storing it, which reduces the amount of data actually written to the disk drives. The foundation for this de-duplication, “Stream Informed Segment Layout (SISL)”, enables Data Domain systems to perform 99% of the de-duplication processing in CPU and RAM. As a result, de-duplication depends on CPU resources rather than on disk. Fortunately, over the last 20 years, CPUs have improved in speed by a factor of millions following Moore’s Law, while disks have improved by only about 10x. This means that Data Domain systems will continue to realize dramatic improvements in speed and scalability as new CPUs are adopted in new models. As new technology is introduced, many of our systems will let you replace the controller with a next-generation model while leaving all the backup data in place. This investment protection ensures you can significantly improve backup performance and scalability without disrupting operations.
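To make the idea of de-duplicating data before it reaches disk more concrete, here is a minimal Python sketch. It is not SISL and not how Data Domain is implemented; it is a generic fingerprint-index illustration. The class name `DedupStore`, the fixed 8-byte `SEGMENT_SIZE`, and the in-memory dictionary standing in for disk are all assumptions made for this example. The point it shows is that the hashing and index lookups happen in CPU and RAM, and only segments that have never been seen before are written.

```python
import hashlib

# Hypothetical segment size, kept tiny so the demo is easy to follow.
SEGMENT_SIZE = 8


class DedupStore:
    """Toy de-duplicating store (illustrative only, not SISL)."""

    def __init__(self):
        self.segments = {}       # fingerprint -> segment bytes (stands in for disk)
        self.bytes_received = 0  # bytes sent by the backup client
        self.bytes_written = 0   # bytes that actually had to be stored

    def write(self, data):
        """Split data into segments, store only unseen ones, return a recipe."""
        recipe = []
        for offset in range(0, len(data), SEGMENT_SIZE):
            segment = data[offset:offset + SEGMENT_SIZE]
            # Fingerprinting and the index lookup are CPU and RAM work.
            fingerprint = hashlib.sha256(segment).hexdigest()
            self.bytes_received += len(segment)
            if fingerprint not in self.segments:
                # Only new segments cost a write.
                self.segments[fingerprint] = segment
                self.bytes_written += len(segment)
            recipe.append(fingerprint)
        return recipe

    def read(self, recipe):
        """Rebuild the original data from its list of fingerprints."""
        return b"".join(self.segments[fp] for fp in recipe)


if __name__ == "__main__":
    store = DedupStore()
    backup = b"ABCDEFGH" * 4 + b"12345678"
    recipe = store.write(backup)
    assert store.read(recipe) == backup
    print(store.bytes_received, "bytes received,", store.bytes_written, "bytes written")
```

In this toy run the backup contains the same 8-byte segment four times, so it is stored once and referenced four times. The trade-off is the one described above: less data is written, at the cost of extra CPU work per segment.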

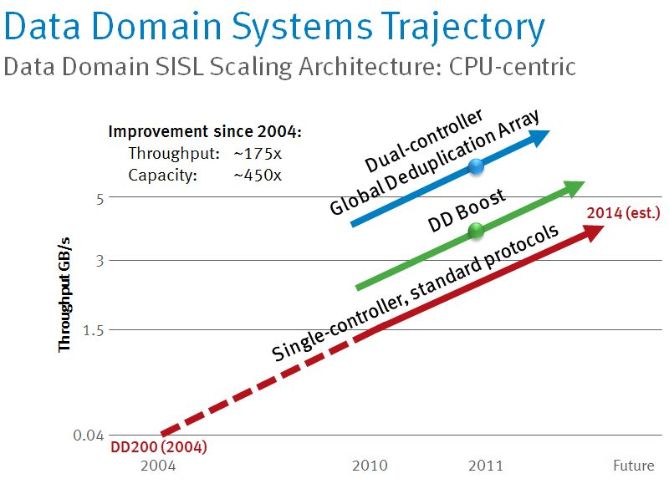

Thanks to this CPU-centric design, Data Domain systems have increased 175 times in throughput and 450 times in capacity since 2004, and EMC expects this trajectory to continue over time.

Hiroki Kobayashi

IT Social Engagement

Follow us @EMCSupport