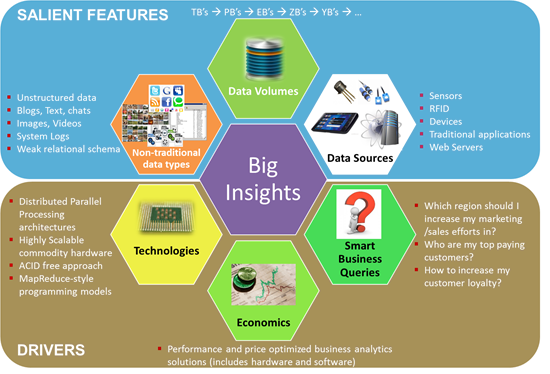

Over the last year, many industry analysts have tried to define Big Data. The most common dimensions used to define it are the 3 V’s: Volume, Velocity and Variety (Volume = multiple terabytes or over a petabyte; Velocity = the speed at which the data is collected; Variety = numbers, audio, video, text, streams, weblogs, social media, etc.). Although the 3 V’s are good parameters for describing Big Data, other factors are at play that must be captured to understand its true nature. In short, to describe the data landscape more holistically, we need to step beyond the 3 V’s. The 3 V’s are better classified as salient features of the data; the real enablers of Big Insights are the technologies, economics and business decisions that make it possible to extract tangible value from that data.

In this discussion I will take a closer look at some of the drivers of Big Insights.

Technologies:

Big Data analysis requires processing huge volumes of non-relational, weakly structured data at an extremely fast pace. This need sparked the rapid emergence of technologies like Hadoop, which help pre-process unstructured data on the fly and perform quick exploratory analytics. This model breaks away from the traditional approach of using procedural code and state management to handle transactions: Hadoop's MapReduce model instead splits the work into independent map and reduce steps that run in parallel across a cluster.
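
To make the map/reduce idea concrete, here is a minimal single-process Python sketch. It is not Hadoop's API; it only imitates the map, shuffle and reduce phases in memory. The weblog format (client IP, URL, status code separated by spaces) and the function names are hypothetical, chosen for illustration. Malformed lines are simply skipped, which is one way a job can tolerate a weak schema.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    """Emit (url, 1) for each log line; malformed lines produce nothing."""
    parts = line.split()
    if len(parts) >= 2:  # hypothetical format: "<ip> <url> <status>"
        yield parts[1], 1


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Group mapped values by key, as the framework does between map and reduce."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    """Combine all values for one key; here, a simple hit count."""
    return key, sum(values)


def run_job(lines: Iterable[str]) -> Dict[str, int]:
    mapped = (pair for line in lines for pair in map_phase(line))
    return dict(reduce_phase(k, v) for k, v in shuffle(mapped).items())


if __name__ == "__main__":
    logs = [
        "10.0.0.1 /home 200",
        "10.0.0.2 /cart 200",
        "garbage",
        "10.0.0.3 /home 404",
    ]
    print(run_job(logs))  # {'/home': 2, '/cart': 1}
```

Because each map call looks at one line and each reduce call looks at one key, a real framework can spread both phases over many machines without shared state.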

Along with new pre-processing technologies, we have also seen the growth of alternative DBMS technologies: NoSQL stores, which analyze large chunks of data in non-traditional structures (for example trees, graphs or key-value pairs instead of tables), and NewSQL systems, which aim to keep SQL and transactional guarantees while scaling out.
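
As a rough illustration of two of these non-tabular structures, the sketch below models customer data with plain Python dictionaries: a key-value layout and a graph (adjacency list) layout. It does not use any particular NoSQL product's API; the keys such as `customer:42` and the helper `reachable_within` are hypothetical names for illustration.

```python
from collections import deque
from typing import Dict, Set

# Key-value model: values are looked up by key, and each record may carry
# different fields (the schema lives in the application, not the store).
kv_store: Dict[str, dict] = {
    "customer:42": {"name": "Ana", "city": "Austin"},
    "customer:43": {"name": "Raj"},  # no "city" field: a weak schema is allowed
}

# Graph model: relationships are stored directly as edges (adjacency list).
follows: Dict[str, Set[str]] = {
    "customer:42": {"customer:43"},
    "customer:43": {"customer:44"},
    "customer:44": set(),
}


def reachable_within(graph: Dict[str, Set[str]], start: str, max_hops: int) -> Set[str]:
    """Breadth-first search: nodes within max_hops edges of start (start excluded)."""
    seen = {start}
    frontier = deque([(start, 0)])
    result: Set[str] = set()
    while frontier:
        node, depth = frontier.popleft()
        if depth == max_hops:
            continue  # do not expand beyond the hop limit
        for nxt in graph.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                result.add(nxt)
                frontier.append((nxt, depth + 1))
    return result


print(kv_store["customer:43"].get("city", "unknown"))        # unknown
print(sorted(reachable_within(follows, "customer:42", 2)))  # ['customer:43', 'customer:44']
```

A "friends of friends" question like the one above would need repeated self-joins in a relational table, while the graph layout answers it by walking edges.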

Other changes are happening on the infrastructure side. High-performance, highly scalable architectures are emerging, built on parallel processing, high-speed networking and fast I/O storage, which together raise throughput (MB/s) when processing large volumes of data.

In addition to these technological changes, we are also witnessing a fundamental paradigm shift in the way DBAs and data architects analyze data. For example, instead of enforcing ACID (atomicity, consistency, isolation, durability) compliance across all database transactions, we are seeing a more flexible approach: ACID is enforced only where it is necessary, and elsewhere the system is designed to become consistent eventually, in a more iterative fashion.
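
One common way to relax strict consistency is eventual consistency. The toy Python model below is a hedged sketch, not how any specific database works: two replicas accept writes independently, may disagree for a while, and converge after they exchange state. It assumes last-writer-wins conflict resolution with integer logical timestamps supplied by the caller; real systems often use vector clocks or other mechanisms. The `Replica` class and its methods are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


@dataclass
class Replica:
    """A toy key-value replica using last-writer-wins (LWW) conflict resolution."""
    name: str
    # key -> (logical timestamp, value)
    data: Dict[str, Tuple[int, str]] = field(default_factory=dict)

    def write(self, key: str, value: str, timestamp: int) -> None:
        self._apply(key, timestamp, value)

    def read(self, key: str) -> Optional[str]:
        entry = self.data.get(key)
        return entry[1] if entry else None

    def _apply(self, key: str, timestamp: int, value: str) -> None:
        current = self.data.get(key)
        # Keep the newer write; ties on timestamp are broken by value so that
        # every replica picks the same winner.
        if current is None or (timestamp, value) > current:
            self.data[key] = (timestamp, value)

    def merge_from(self, other: "Replica") -> None:
        """Anti-entropy step: pull the other replica's state and resolve conflicts."""
        for key, (ts, value) in other.data.items():
            self._apply(key, ts, value)


a, b = Replica("a"), Replica("b")
a.write("cart:7", "2 items", timestamp=1)
b.write("cart:7", "3 items", timestamp=2)  # concurrent update on another replica
print(a.read("cart:7"), b.read("cart:7"))  # 2 items 3 items  (temporarily inconsistent)
a.merge_from(b)
b.merge_from(a)
print(a.read("cart:7"), b.read("cart:7"))  # 3 items 3 items  (converged)
```

The trade-off is visible in the first `print`: reads can briefly return stale data, in exchange for replicas that never block each other on writes. Operations that cannot tolerate this, such as payments, are where full ACID guarantees remain necessary.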

Economics:

The emergence of these new technologies is further fueled by the economics of providing highly scalable business analytics solutions at a low cost. Hadoop comes to mind as the prime example. A valuable white paper describes how to build a three-node Hadoop solution using a Dell OptiPlex desktop PC running Linux as the primary machine. The solution was priced under $5,000 USD.

These economics are driving faster adoption of new technologies on off-the-shelf hardware, enabling even a research scientist or a college student to re-purpose existing hardware to try out new software frameworks.

Smart Business Queries:

Making timely decisions is critical to a business's survival, and even more so today, given the growing amount of available data and the associated complexity of managing a business. Asking the right questions and making the right investments in BI technologies and processes is therefore key for present and future information-driven businesses.

Business Insights:

I cannot stress enough the importance of business insights, which I also highlighted in my previous blog post, Business Intelligence: The Big Picture. As enterprises keep getting smarter at managing their data, they must realize that no matter how small or big their data set is, the true value of the data is realized only when it has produced actionable information (insights)! With this in mind, we must view a Big Data implementation as incomplete until the data has been analyzed and the resulting actionable information has been reported to its users. Some examples of successful business insights implementations include (but are not limited to):

- Recommendation engines: increase average order size by recommending complementary products based on predictive analysis for cross-selling (commonly seen on Amazon, eBay and other online retail websites).

- Social media intelligence: one of the most powerful use cases I have witnessed recently is the MicroStrategy Gateway application, which lets enterprises combine their corporate view of a customer with that customer’s Facebook view.

- Customer loyalty programs: many prominent insurance companies have implemented these solutions to identify useful customer trends.

- Large-scale clickstream analytics: many ecommerce websites use clickstream analytics to correlate customer demographic information with their buying behavior.
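
To show the basic mechanics behind the first item, here is a minimal "frequently bought together" sketch in Python based on co-occurrence counting. This is a deliberately simple stand-in, not the predictive models Amazon, eBay or other retailers actually use; the order data and the function names `build_cooccurrence` and `recommend` are hypothetical.

```python
from collections import Counter
from itertools import permutations
from typing import Dict, List


def build_cooccurrence(orders: List[List[str]]) -> Dict[str, Counter]:
    """Count how often each pair of distinct products appears in the same order."""
    counts: Dict[str, Counter] = {}
    for order in orders:
        # set() ignores duplicate lines in one order; permutations gives both (a, b) and (b, a)
        for a, b in permutations(set(order), 2):
            counts.setdefault(a, Counter())[b] += 1
    return counts


def recommend(counts: Dict[str, Counter], product: str, k: int = 2) -> List[str]:
    """Return up to k products most often bought together with `product`."""
    return [item for item, _ in counts.get(product, Counter()).most_common(k)]


orders = [
    ["camera", "sd_card", "tripod"],
    ["camera", "sd_card"],
    ["camera", "sd_card", "bag"],
    ["camera", "tripod"],
]
counts = build_cooccurrence(orders)
print(recommend(counts, "camera"))  # ['sd_card', 'tripod']
```

Suggesting the top co-purchased items at checkout is one simple way a retailer might try to raise the average order size through cross-selling.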

The takeaway here is that enterprises should remain focused on the value their data can provide in enabling them to make intelligent business decisions. So it is important to have a holistic view that does not emphasize certain parameters of Big Data to the detriment of others. In other words, businesses have to keep the big picture in mind. So how do you measure the impact of a Big Data implementation for your organization?

Connect with the author, @shree_dandekar, on Twitter.