Big Data has exposed the need for deeper data insights through predictive analytic techniques such as data mining, machine learning, and modeling. Interestingly, predictive analytics has been around for a long time, used by a select few in select organizations. Its value has always been recognized and applauded, but its true potential was never fully realized because of limited adoption and issues around data accessibility, performance, statistical expertise, business sponsorship, cost, and more. Even so, nearly 90 percent of organizations that do employ predictive analytic software agree that it has given them a competitive advantage, according to a new survey.

The advent of Big Data has driven the uptake of predictive analytics, thanks to the curiosity of very capable Data Scientists and to new tools and technologies from companies such as Alpine Data Labs. Alpine Data Labs provides next-generation predictive analytics to address legacy issues and meet the new demands of Big Data. More importantly, Alpine Data Labs is oriented toward the mainstream: business users, not just statisticians and Data Scientists, are encouraged to mine data.

Backed by $16M in Series B funding, Alpine Data Labs is gaining serious momentum in the Big Data analytics startup space, offering zero coding for creating and deploying complex predictive models on Hadoop. I spoke with Alpine Data Labs CEO Joe Otto about the company's game-changing approach to predictive analytics for Big Data.

1. Let's first talk about leading predictive analytics incumbents such as SAS, IBM SPSS, and other analytics vendors who got their start years ago with desktop and server software designed for data mining and advanced analytics. How has Alpine Data Labs overcome the issues around these incumbent technologies and addressed the new needs of Big Data?

In the pre-Big Data era, these incumbent technologies were designed for specialized users to work on samples of structured data sets that were delivered to them by IT. Now, in the era of Big Data, users don’t have the patience for this paradigm – they want fast access to all of the data, both structured and unstructured, and the self-service capabilities to analyze what they need. There is also an expectation that everyone in the organization be data-driven in order to maximize the value of Big Data. This requires a broader class of people who are not specialists to participate in data analysis, which these incumbent technologies do not facilitate.

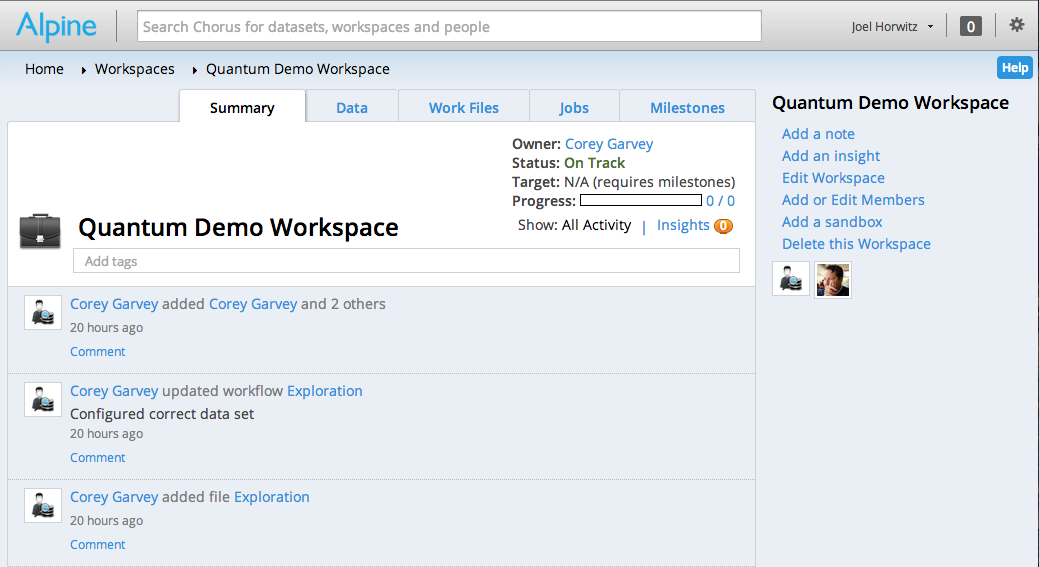

Alpine Data Labs has built next-generation predictive analytics software for the Big Data era – one that’s self-service and flexible, with collaboration and knowledge sharing built into every step of the analytic process. Data scientists, analysts, and frontline workers use the same tool to communicate easily about data and insight, working together towards a common goal – all with enterprise visibility, so the entire organization becomes data-driven. From a technical perspective, Alpine Data Labs takes advantage of high-performance analytic processing systems such as Hadoop and MPP databases to enable users to build and deploy complex predictive models directly where the data natively resides – all without having to write a single line of code in, for example, SQL or MapReduce.

2. What are the supported data platforms, and what sets Alpine apart from other Big Data application vendors such as Datameer and Platfora?

Our signature is in Data Science for Hadoop and Big Data. We provide predictive analytics software, not just visualization tools. Our solution is also an end-to-end data application that works in hybrid environments, combining traditional data sources such as MPP databases (e.g., Pivotal Greenplum Database) and new sources like Hadoop. This makes our offering all the more relevant, as a big portion of the market is looking to use algorithms across all data types.

3. The predictive analytics or Data Mining process involves data exploration, ETL processing or data preparation, model building, testing, and deployment. How does Alpine improve the Data Mining process?

There are three ways we improve the data mining process for faster, more accurate predictive analytics – collaboration, code-free operation, and no data movement. The collaborative features bring people of various business and technical expertise together in one place to ensure the right data, predictive models, and insights are delivered. Users have direct access to the same data set where the data natively resides, for high-performance, more accurate model building. And all steps of the analytic process – data transformation, feature creation, model building, and deployment – can be done by a non-technical person through a point-and-click, drag-and-drop web user interface.

4. There has been a very close relationship with EMC since Alpine Data Labs was spun off from EMC Greenplum in 2010. What is the state of the partnership today with Pivotal, the new Big Data company formed from Greenplum and VMware technologies?

EMC was one of the original investors in Alpine Data Labs and is still an investor. We share the same vision for Big Data with Pivotal, especially when it comes to the need to tie deep data science capabilities to collaboration. This is the key to maximizing the value of Big Data, since only through continuous communication between the data scientist and the frontline business worker can a more accurate predictive model be delivered. And when you combine this with enterprise visibility, the entire organization works as a single unit, continuously sharing knowledge and leveraging insight to maximize value and become data-driven.